Blog posted on 10th May 2026

AI Penetration Testing Explained: Risks, Methods and Benefits

Introduction:

Artificial intelligence is now part of everyday business operations. From automation tools to advanced data systems, organisations are relying on AI to improve efficiency and decision making. But as adoption grows, so do the risks. Traditional security methods are no longer enough to protect these systems.

This is where AI penetration testing becomes essential. It helps identify vulnerabilities within AI systems before attackers can exploit them. By understanding how these risks develop and how they can be tested, businesses can build stronger and more secure environments.

Why AI Security Testing Is Critical Today

The rise of AI has introduced new opportunities, but it has also created new security challenges. AI systems process large volumes of data and make automated decisions, which means any weakness can have serious consequences.

New attack surfaces are emerging as organisations integrate machine learning models into their systems. These include data pipelines, APIs, and model behaviour itself. Unlike traditional systems, AI environments are dynamic and constantly evolving, making them harder to secure.

AI security testing is critical because it addresses these modern risks. Without it, vulnerabilities can go unnoticed, leaving systems exposed to attacks that traditional penetration testing may not detect.

What Is AI Penetration Testing

AI penetration testing is a specialised form of security testing that focuses on identifying weaknesses in artificial intelligence and machine learning systems. It simulates real world attacks to understand how these systems respond under pressure.

Unlike standard penetration testing, which mainly targets networks or applications, AI penetration testing takes a lifecycle approach. This means it assesses every stage of the AI system, from data collection and training to deployment and operation.

By testing the entire lifecycle, organisations can identify risks that may exist within data, models, or system integrations. This approach ensures that security is not limited to one area but covers the full scope of the AI environment.

AI vs Traditional Pen Testing

AI penetration testing differs significantly from traditional methods. Conventional testing focuses on known vulnerabilities within infrastructure, such as servers or applications. It follows structured processes that work well for static systems.

AI systems, however, behave differently. They learn from data, adapt over time, and can respond unpredictably. This creates new types of vulnerabilities that traditional testing cannot easily detect.

Another limitation of traditional testing is its scope. It often overlooks risks within data and model behaviour. AI penetration testing addresses this gap by focusing on how models process inputs, make decisions, and respond to unusual scenarios.

Understanding these differences highlights why AI specific testing is necessary in modern cybersecurity strategies.

Common AI Security Risks

Data Poisoning

Data poisoning occurs when attackers manipulate training data to influence how a model behaves. By introducing corrupted or misleading data, they can cause the system to produce incorrect outputs. This type of attack is particularly dangerous because it affects the model at its core.

Prompt Injection

Prompt injection targets systems that rely on user inputs, especially large language models. Attackers craft specific inputs to bypass controls or force the system to behave in unintended ways. This can lead to data exposure or unauthorised actions.

Model Theft

Model theft involves extracting sensitive information from a trained model. Attackers may attempt to replicate the model or gain access to proprietary data used during training. This not only affects security but also impacts intellectual property.

Adversarial Attacks

Adversarial attacks involve subtle changes to input data that can mislead AI systems. These changes may be difficult to detect but can cause significant errors in output. This highlights the importance of testing how models respond to unexpected inputs.

Techniques Used in AI Pen Testing

Red Teaming

Red teaming involves simulating real world attacks to test the resilience of AI systems. Security professionals take on the role of attackers to identify weaknesses and assess how systems respond under pressure. This approach provides valuable insights into potential vulnerabilities.

Vulnerability Simulation

Vulnerability simulation focuses on testing specific weaknesses within AI environments. This includes analysing data flows, APIs, and model interactions. By simulating different attack scenarios, organisations can identify areas that require improvement.

Model Stress Testing

Model stress testing examines how AI systems perform under extreme or unusual conditions. This includes testing with unexpected inputs or high volumes of data. The goal is to identify weaknesses that may not appear during normal operation.

Benefits of AI Penetration Testing

AI penetration testing offers several important benefits. One of the main advantages is risk reduction. By identifying vulnerabilities early, organisations can prevent security incidents before they occur.

Compliance is another key benefit. Many industries require strict data protection and security standards. AI security testing helps organisations meet these requirements and demonstrate their commitment to cybersecurity.

Resilience is also improved through continuous testing. AI systems become more robust as vulnerabilities are identified and addressed. This leads to better performance and greater confidence in the system.

Challenges in AI Security Testing

Despite its benefits, AI penetration testing presents challenges. One of the main issues is complexity. AI systems are made up of multiple components, including data, models, and integrations. Testing each part requires specialised knowledge.

Evolving threats add another layer of difficulty. Attack methods continue to change, and new vulnerabilities are constantly emerging. This makes it essential for organisations to stay updated and adapt their testing strategies.

There is also a skills gap in the industry. Effective AI penetration testing requires expertise in both cybersecurity and machine learning. Finding professionals with this combination of skills can be challenging.

Best Practices for AI Pen Testing

To implement AI penetration testing effectively, organisations should follow a structured approach. The first step is to understand the AI systems in use. This includes identifying data sources, models, and integrations.

Testing should be integrated into the development process rather than treated as a final step. Continuous monitoring helps identify issues early and reduces the risk of major failures.

Combining automated tools with human expertise is also important. While automation improves efficiency, human insight is essential for understanding complex scenarios and making strategic decisions.

Clear processes for reporting and addressing vulnerabilities should be established. This ensures that identified risks are managed effectively and do not remain unresolved.

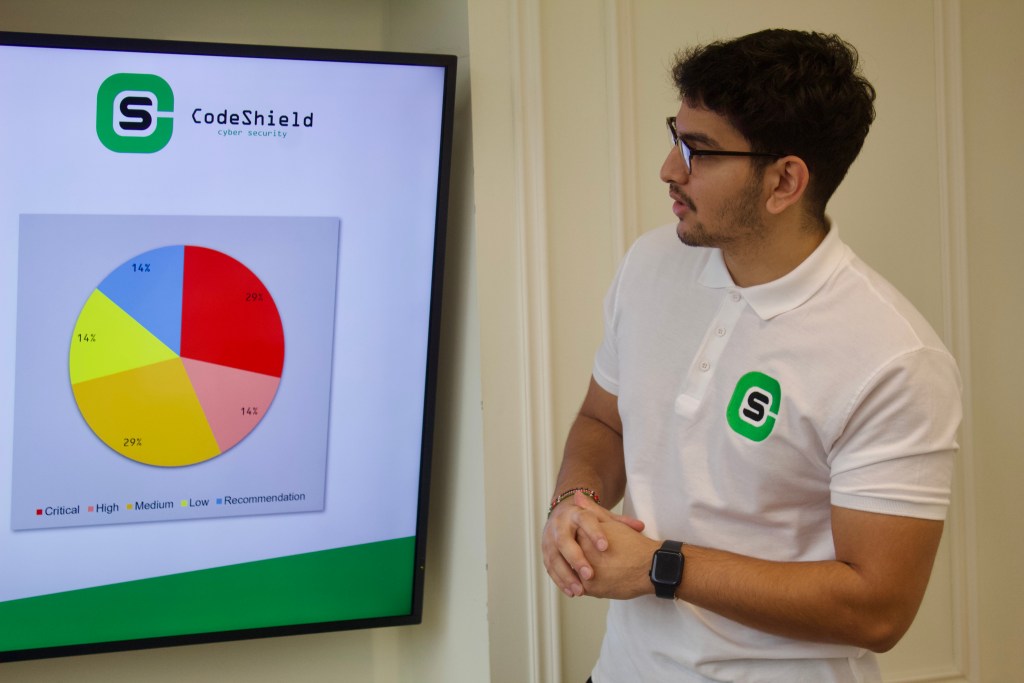

At CodeShield, the focus is on delivering practical and effective AI penetration testing strategies that help organisations secure their systems and stay ahead of evolving threats.

Conclusion & Author:

AI penetration testing is becoming a critical part of modern cybersecurity. As organisations continue to adopt artificial intelligence, the need to secure these systems will only increase.

By understanding the risks, applying the right techniques, and following best practices, businesses can protect their data, systems, and operations. A proactive approach to AI security testing ensures that vulnerabilities are addressed before they become serious

Have a different question?

Speak to a security expert today: